Amid Africa’s highly anticipated 2024 election season, the continent is on high alert for potential surges in disinformation campaigns.

With many countries preparing for crucial polls, the digital landscape has become a battleground for shaping public opinion on key electoral issues.

Malicious actors or accounts are orchestrating coordinated disinformation campaigns to influence voter sentiment and shape narratives.

For instance, during the 2023 elections in the Democratic Republic of the Congo (DRC), Code for Africa (CfA) flagged disinformation campaigns that aimed to undermine the credibility of the independent Congolese National Electoral Commission and its ability to ensure free and fair polls.

This flood of false information led to widespread online scepticism, with voters expressing concerns about alleged irregularities in certain regions.

Additionally, candidates from various political backgrounds exploited hashtags to spread misinformation and tarnish their opponents’ reputations.

To counter such tactics, voters must be able to identify malign actors or accounts and recognise coordinated electoral disinformation campaigns. This empowers individuals to critically assess online information, enabling them to make informed decisions based on accurate and reliable sources rather than being swayed by deceptive narratives.

CfA has compiled a guide to assist citizens in identifying suspicious accounts and coordinated disinformation campaigns.

This guide will help voters to navigate the deluge of electoral disinformation on social media platforms.

1. Suspicious timing of online posts by multiple accounts

When several similar posts on a specific topic pop up almost simultaneously or very close together, it raises red flags about potential coordination by the accounts behind them.

In normal online discussions, posts about a particular subject appear gradually over time as users share their thoughts independently. But if you notice a sudden flood of the same posts on a single topic within a short timeframe, it suggests a level of organisation uncommon in natural conversations.

For example, on 17 January 2024, a network of seven Facebook pages based in Mali coordinated to amplify a live broadcast focusing on Russian cooperation with Alliance of Sahel States (AES) countries.

The broadcast aimed to paint Russia in a positive light by highlighting Moscow’s efforts to promote unity through collaboration between the Economic Community of West African States (Ecowas) and AES countries to foster peace in the Sahel region.

CfA discovered that the network also boosted other posts emphasising Russian-Malian cooperation, including projects in Mali supported by Russia.

The seven Malian Facebook pages, which primarily identified themselves as news outlets and personal blogs, published a post titled “Russia asks Ecowas to discuss peace in the Sahel with the AES countries”, which received 1,936 views.

The first Facebook page shared this post at 22:57:34 CAT on 17 January 2024. Just six seconds later, at 22:57:40 CAT, the six other Facebook pages published the same post.
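
For readers comfortable with a little code, the timing check described above can be sketched in Python. This is a minimal illustration, not CfA's actual method: the function name `find_posting_bursts`, the ten-second window, the three-account threshold and the page names are all assumptions made for the example. Only the post title and the two timestamps come from the case above.

```python
from datetime import datetime, timedelta


def find_posting_bursts(posts, window_seconds=10, min_accounts=3):
    """Flag texts posted by many accounts shortly after their first appearance.

    posts: list of (account, text, timestamp) tuples.
    The window and threshold are illustrative assumptions, not established cut-offs.
    """
    # Group posts by their text, ignoring case and surrounding whitespace.
    by_text = {}
    for account, text, ts in posts:
        by_text.setdefault(text.strip().lower(), []).append((ts, account))

    window = timedelta(seconds=window_seconds)
    bursts = []
    for text, items in by_text.items():
        items.sort()  # earliest post first
        first_ts = items[0][0]
        # Collect accounts that posted within the window after the earliest post.
        accounts = {acc for ts, acc in items if ts - first_ts <= window}
        if len(accounts) >= min_accounts:
            bursts.append(
                {"text": text, "accounts": sorted(accounts), "first_seen": first_ts}
            )
    return bursts


# Hypothetical page names; the timestamps mirror the Mali example above.
title = "Russia asks Ecowas to discuss peace in the Sahel with the AES countries"
first = datetime(2024, 1, 17, 22, 57, 34)
sample_posts = [("page_1", title, first)]
sample_posts += [
    (f"page_{i}", title, first + timedelta(seconds=6)) for i in range(2, 8)
]
# An invented later share that falls outside the window.
sample_posts.append(("page_8", title, first + timedelta(hours=5)))

bursts = find_posting_bursts(sample_posts)
for burst in bursts:
    print(len(burst["accounts"]), "accounts posted within the window:", burst["accounts"])
```

A burst flagged this way is only a prompt for closer inspection. Breaking news can also cause many people to post the same headline within seconds of one another.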

2. Accounts copy-pasting the same post

Coordinated disinformation campaigns often flood social media platforms with large amounts of content, frequently sharing identical text or links. This is not the usual pattern for social media posts and raises suspicions of coordinated efforts.

The consistent repetition of a particular narrative aims to give the impression that the message is widely accepted, thus increasing the likelihood of positive audience reception.

For instance, on 23 November 2023, CfA identified 13 Facebook posts that used the copy-paste technique to amplify claims that donations from former DRC presidential candidate Moïse Katumbi for displaced people in North Kivu, DRC, were obstructed by the provincial government.

These accounts were primarily managed from within the DRC and received 3,249 interactions.
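
The copy-paste pattern can be checked in a similar way. The sketch below groups posts whose text is identical once case, punctuation and spacing are ignored, then reports any text shared by several distinct accounts. The function names, the three-account threshold and the sample posts are invented for illustration. A real investigation would likely also need fuzzy matching to catch lightly edited copies.

```python
import re
from collections import defaultdict


def normalise(text):
    """Lowercase, strip punctuation and collapse whitespace so trivial edits do not hide a copy."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def find_copy_paste_clusters(posts, min_accounts=3):
    """Return {normalised text: sorted accounts} for texts shared by several accounts.

    posts: list of (account, text) tuples.
    min_accounts is an illustrative threshold, not an established cut-off.
    """
    clusters = defaultdict(set)
    for account, text in posts:
        clusters[normalise(text)].add(account)
    return {
        text: sorted(accounts)
        for text, accounts in clusters.items()
        if len(accounts) >= min_accounts
    }


# Invented sample data: three accounts share one claim with cosmetic differences.
sample_posts = [
    ("acct_a", "Example claim about Candidate X!!"),
    ("acct_b", "example claim  about candidate x"),
    ("acct_c", "EXAMPLE CLAIM ABOUT CANDIDATE X."),
    ("acct_d", "I voted this morning, the queue was long"),
]

clusters = find_copy_paste_clusters(sample_posts)
for text, accounts in clusters.items():
    print(f"{len(accounts)} accounts posted: {text!r}")
```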

3. Accounts with suspicious posting history

To identify coordinated disinformation campaigns, it is crucial to examine the posting history of a few accounts making the same suspicious claims.

By clicking on these accounts and analysing their past activity, you can determine if they consistently endorse a specific political agenda.

If these accounts primarily focus on one topic, they may be coordinating to amplify a particular agenda or narrative. Although genuine individuals may express strong support for a political cause, it is rare for their entire posting history to be dedicated solely to that agenda.
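
As a rough illustration of this check, the sketch below measures what share of an account's past posts mention a given topic. Keyword matching is a crude stand-in for actually reading the posts. The function name `topic_share`, the keywords and the sample histories are all hypothetical.

```python
def topic_share(posts, keywords):
    """Return the fraction of posts that mention at least one keyword (case-insensitive)."""
    if not posts:
        return 0.0
    lowered_keywords = [keyword.lower() for keyword in keywords]
    hits = sum(
        1
        for post in posts
        if any(keyword in post.lower() for keyword in lowered_keywords)
    )
    return hits / len(posts)


# Hypothetical keywords and posting histories for two invented accounts.
keywords = ["election", "candidate x"]

single_issue_history = [
    "Candidate X won the election, the results were hacked",
    "The election database was hacked",
    "Share if you believe Candidate X won",
    "Election officials are hiding the truth",
    "Happy birthday to my sister",
]
mixed_history = [
    "Great match last night",
    "Election day tomorrow, go vote",
    "Trying a new recipe this weekend",
    "Traffic is terrible today",
]

single_issue_share = topic_share(single_issue_history, keywords)
mixed_share = topic_share(mixed_history, keywords)
print(f"single-issue account: {single_issue_share:.0%} of posts on topic")
print(f"mixed account: {mixed_share:.0%} of posts on topic")
```

A high share does not prove coordination on its own. It becomes more telling when several accounts pushing the same claim all show the same narrow focus.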

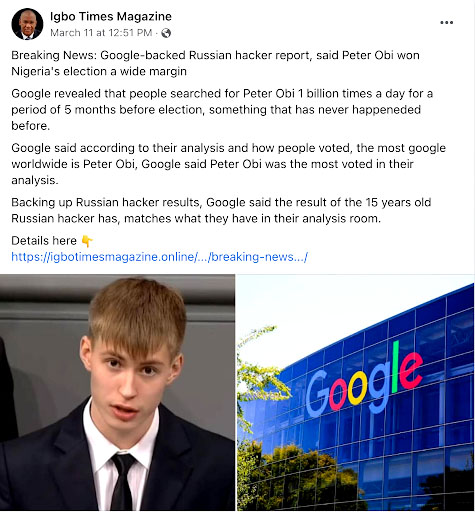

For instance, in the aftermath of the Nigerian presidential elections in February 2023, widespread doubts surfaced regarding the election results.

On 11 March 2023, two Facebook pages, Igbo Times Magazine and Express, which regularly share content related to Biafra, made allegations about a “Google-backed” Russian hacker targeting the Independent National Electoral Commission’s elections database.

These accounts claimed that presidential candidate Peter Gregory Obi had won against the declared president-elect, Bola Ahmed Tinubu. A look at the posting history of these accounts revealed a pattern of posting disinformation while promoting Biafra-related content.

4. Accounts use identity signals in their posts

To identify coordinated campaigns, it is essential to examine whether the posts use clear identity signals, particularly those related to politics.

These signals often manipulate perceptions, portraying a coordinated effort as a spontaneous and widespread movement. Examples include displaying national flags or employing divisive political hashtags, like #ZanuPfMustGo.

During the pre-election period in Zimbabwe in 2023, the hashtag #ZanuPfMustGo gained prominence as one of the main hashtags used by opposition parties, including the Citizens Coalition for Change.

Its primary purpose was to criticise and disseminate claims about the shortcomings and failures of Zanu-PF, the current ruling party.

5. Fake accounts or impersonation

Fake accounts or impersonation involve the creation and use of fictitious online personas that mimic real individuals or organisations.

Coordinated efforts use these deceptive profiles to disseminate false information, manipulate public opinion, or amplify particular narratives.

These fake accounts often present themselves as genuine users, adopting convincing details such as profile pictures, bios and engagement with other users to establish credibility.

During the 2022 Kenyan elections, there was a noticeable use of sock-puppet accounts impersonating prominent and influential politicians.

These accounts would post controversial and unverified information, often containing disinformation and incendiary content, with the aim of going viral and increasing their follower count.

Unfortunately, many users targeted by these accounts were unable to tell that the posts were fake.

As a result, they fell victim to this misinformation either by believing it or sharing it, thus influencing offline behaviour.

The fake accounts predominantly impersonated three influential figures, particularly during the period surrounding the elections: Independent Electoral and Boundaries Commission (IEBC) commissioner Juliana Cherera, IEBC chairperson Wafula Chebukati, and Jubilee Party vice-chairperson David Murathe.

Free and fair elections are essential to democracy.

However, malicious actors are increasingly leveraging digital tools to influence electoral outcomes.

According to experts, there is a prevalent misconception that African countries are incapable of sophisticated disinformation campaigns on social media.

They warn that such misconceptions underestimate the capabilities of political parties and online actors on the continent and overlook the extent to which individuals are willing to manipulate online platforms to advance their agendas.

Media watchdogs also emphasise that it is important for digital citizens to be informed about how to engage responsibly online, particularly on social media platforms. DM

First published by Code for Africa

Co-written by iLAB deputy manager Mitchelle Awuor and iLAB investigative analysts Anita Igbine and John Ndung’u. Edited by iLAB insights manager Nicholas Ibekwe and iLAB copy editor Theresa Mallinson.

Find out how to identify the digital deceptions used to derail electoral integrity as African countries prepare for crucial polls. (Image: Code for Africa)
