My son Joseph works in AI in the Netherlands after having finished his graduate degree there. On a recent visit to Amsterdam he scolded me for my reporting on space-based data centres. From where he sits, at the centre of the industry, surrounded by serious techies, the whole enterprise looks like a boondoggle. Too many challenges. Too much hype. IPO pumping. Future financial disasters and engineering failures a foregone conclusion. I was not being critical enough, he chided.

So I have gone into the weeds again, with a more jaundiced eye.

The orbital data centre pitch is truly seductive. AI is eating energy at a terrifying rate and energy is now civilisation’s scarcest resource. By some projections, data centres will consume roughly 9% of all US electricity by 2030. Hyperscalers (the data centre giants) are buying up nuclear power. In space, by contrast, you have unlimited solar energy (a panel in the right orbit can produce up to about eight times as much energy as the same panel on Earth), no water bills, no grid queues, no cost of land, no noise. Put your data centre up there and you solve everything at once.

If the architecture, physics, operating processes and costs were all properly understood, then yeah, a no-brainer. But there is a pile of unknowns, perhaps high enough to be unscalable.

Let’s start with the most obvious paradox. Space is cold (near absolute zero in the void), yet it is almost impossible to cool anything there, including silicon chips, which run fiercely hot when “thinking”. On Earth, air and liquid carry heat away from chips. In orbit, there is no air, and although coolant loops can shuttle heat around inside a spacecraft, there is nothing outside to hand it to. Every joule of thermal energy produced by a graphics processing unit (GPU) must ultimately be radiated away as infrared light. That is the only option physics permits.
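How much heat can a panel shed by radiation alone? The Stefan-Boltzmann law gives a best case. The sketch below assumes a flat panel with an emissivity of 0.9, radiating from both faces to a 3 K sky, and ignores sunlight, Earth’s infrared glow and everything else that makes real radiators worse; the temperatures and the 80kW load are illustrative choices, not design figures.

```python
# Stefan-Boltzmann constant, W m^-2 K^-4
SIGMA = 5.670374419e-8


def radiated_flux(surface_temp_k: float, emissivity: float = 0.9,
                  sink_temp_k: float = 3.0) -> float:
    """Net power radiated per square metre of one radiator face, in W/m^2.

    Idealised: ignores absorbed sunlight, Earth's infrared and the panel's
    view of the spacecraft itself.
    """
    return emissivity * SIGMA * (surface_temp_k**4 - sink_temp_k**4)


def radiator_area_m2(heat_w: float, surface_temp_k: float,
                     emissivity: float = 0.9, faces: int = 2) -> float:
    """Panel area needed to reject heat_w watts when `faces` sides radiate."""
    return heat_w / (radiated_flux(surface_temp_k, emissivity) * faces)


# Roughly room temperature and a little either side of it.
for temp_k in (280, 300, 330):
    print(f"{temp_k} K: {radiated_flux(temp_k):.0f} W/m^2 per face, "
          f"{radiator_area_m2(80_000, temp_k):.0f} m^2 of two-sided panel for 80 kW")
```

Even this idealised calculation asks for something like 100m² of panel per 80kW at room temperature, and real-world figures, like the ISS’s, come out considerably worse.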

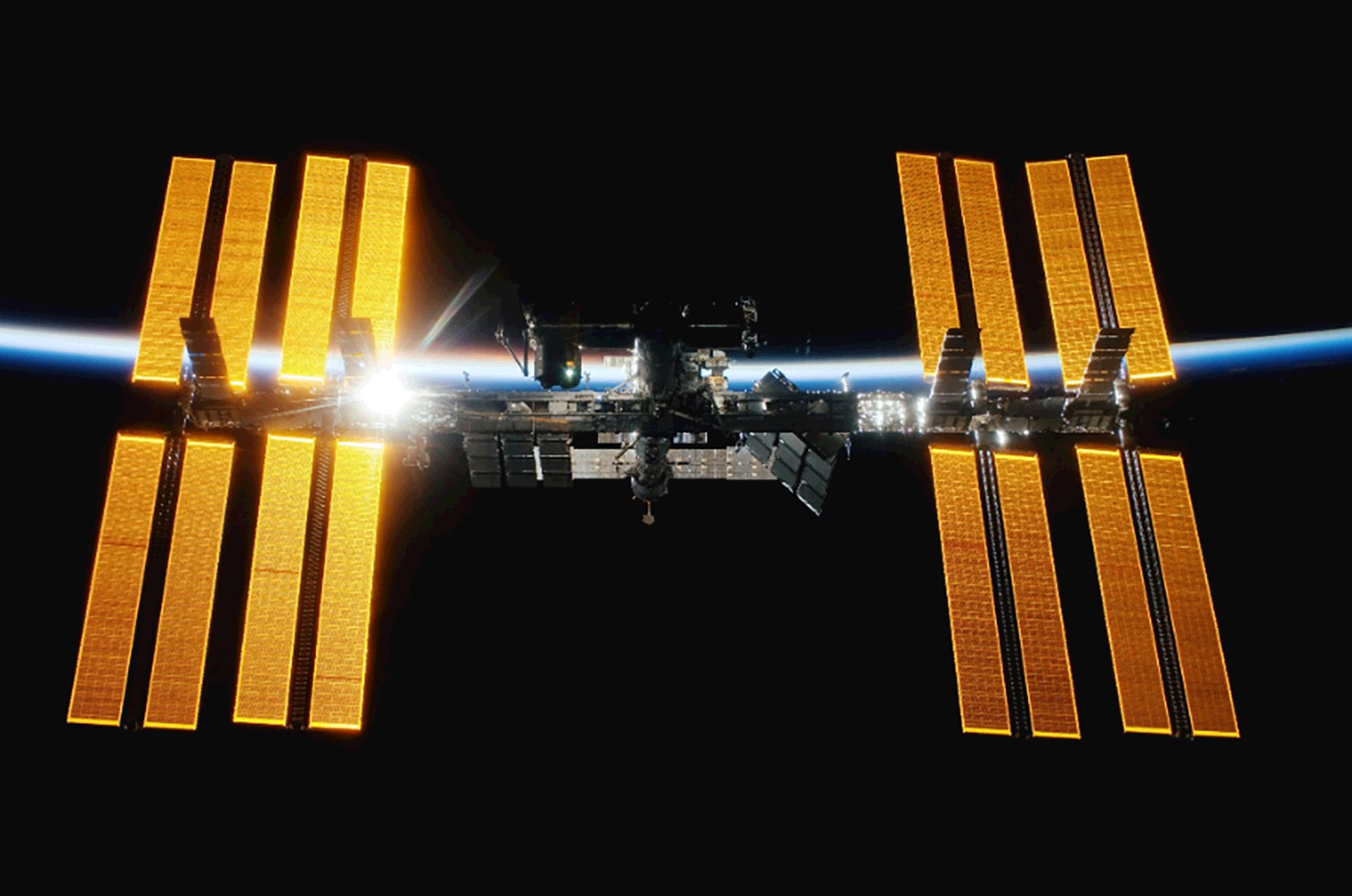

Nvidia’s Jensen Huang, who knows his way around a hot chip, put it plainly in his Nvidia Q4 fiscal year 2026 earnings call on 25 February: “It’s cold in space, but there’s no airflow, and so the only way to dissipate is through conduction.” The International Space Station, the most expensive structure humans have built, manages to radiate about 70 kilowatts (kW) of heat using 422 square metres of ammonia-loop radiators. A single rack of modern H100 GPUs generates roughly 80kW. In other words, cooling one GPU rack would take more than the station’s entire 422m² radiator array, and data centres have thousands of racks (tens of thousands for the biggest of them). Think about that ratio for a moment. The calculus is daunting.
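The ISS ratio can be scaled up directly. This is a back-of-envelope sketch that simply assumes a space data centre would need radiator area in the same proportion as the station (422m² per 70kW); the rack counts are hypothetical sizes chosen for illustration.

```python
ISS_RADIATOR_M2 = 422.0   # ISS radiator area cited in the column
ISS_HEAT_KW = 70.0        # heat the ISS radiators reject, per the column

# Area-per-kilowatt ratio implied by the ISS figures (about 6 m^2 per kW).
M2_PER_KW = ISS_RADIATOR_M2 / ISS_HEAT_KW


def iss_equivalent_area_m2(heat_kw: float) -> float:
    """Radiator area needed at the ISS's area-per-kilowatt ratio."""
    return heat_kw * M2_PER_KW


rack_kw = 80.0  # one H100 rack, per the column
print(f"One rack: {iss_equivalent_area_m2(rack_kw):,.0f} m^2")

for racks in (1_000, 10_000):  # hypothetical data-centre sizes
    area_km2 = iss_equivalent_area_m2(rack_kw * racks) / 1e6
    print(f"{racks:,} racks: {area_km2:.2f} km^2 of radiator")
```

On that crude scaling, a single rack needs a little under 500m², and a 10,000-rack facility needs radiators measured in square kilometres.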

To cool a meaningful AI cluster – say, 1,000 H100s – you need a radiator structure roughly the size of a football field, folded origami-style for launch and deployed in orbit. I looked into this, and there are indeed start-ups working on the origami solution. But the radiator also has to point away from the sun at all times, while the satellite itself passes through light and shadow roughly 16 times a day, with surface temperatures swinging from about +120°C to about –157°C on every orbit. So many engineering hurdles to clear.
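The “16 times a day” figure follows from Kepler’s third law for a circular orbit. The sketch below assumes circular orbits at typical low Earth orbit altitudes and uses Earth’s equatorial radius; it only counts orbits, since how much of each orbit is spent in Earth’s shadow depends on the orbit’s orientation and is not modelled here.

```python
import math

MU_EARTH = 3.986004418e14   # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6_378_137.0       # Earth's equatorial radius, m


def orbital_period_s(altitude_km: float) -> float:
    """Period of a circular orbit at the given altitude (Kepler's third law)."""
    a = R_EARTH + altitude_km * 1_000.0  # orbital radius, m
    return 2 * math.pi * math.sqrt(a**3 / MU_EARTH)


def orbits_per_day(altitude_km: float) -> float:
    """Number of complete circular orbits in 24 hours."""
    return 86_400.0 / orbital_period_s(altitude_km)


for alt in (400, 500, 600):
    print(f"{alt} km: {orbital_period_s(alt) / 60:.1f} min per orbit, "
          f"{orbits_per_day(alt):.1f} orbits per day")
```

At 400km this works out to roughly 92 minutes per orbit, or about 15.5 trips around the planet a day, falling to about 15 at 600km.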

Some emerging solutions do exist – deployable carbon nanotube radiators, loop heat pipes, even liquid droplet radiators that spray microscopic fluid droplets into space and collect them on the other side. But none of these has been proven at the power densities that AI training or inference demands. Starcloud, the Y Combinator-backed start-up that put the first Nvidia H100 into orbit in November 2025, is using passive radiative cooling on its demonstration satellite. Its proposed gigawatt-scale “Hypercluster” is a fundamentally different beast, and the thermal engineering at that scale remains largely theoretical.

Meanwhile, the radiation environment of low Earth orbit destroys hardware. Cosmic rays and solar protons cause two categories of damage: single-event upsets, such as bit flips that corrupt calculations in real time, and cumulative analogue degradation that gradually shifts transistor thresholds until chips become unreliable and then useless. On Earth, a GPU stays in service for perhaps two or three years before it is economically obsolete. In orbit, radiation may shorten that considerably.

The traditional aerospace solution is radiation-hardened chips. The problem is that “rad-hard” silicon is a decade or more behind consumer technology. You cannot train a frontier AI model on hardware from 2010. Google has been testing its TPU (tensor processing unit) v6 chips in a proton beam simulating five years of low Earth orbit (LEO) exposure, and the results are cautiously encouraging. The processors came through without hard failures. The weak point was the high-bandwidth memory, which began to show irregularities at roughly three times the expected five-year dose. That is a thinner margin, and memory is where large model weights live.

The alternative is running commercial GPUs with triple modular redundancy: three copies of every chip running in parallel, with a vote to catch errors, which triples your mass, launch costs, power draw and cooling requirements at a stroke. It is a solution that eats its own savings.
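The voting step at the heart of triple modular redundancy is simple to illustrate. This is a minimal software sketch of a bitwise two-out-of-three vote, assuming three copies compute the same 8-bit value; real systems often do the voting in hardware, and a vote cannot rescue a result if two copies are hit in the same bit.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise 2-of-3 vote: each output bit takes the value at least two inputs share."""
    return (a & b) | (a & c) | (b & c)


# Three copies compute the same 8-bit result; radiation flips one bit in copy b.
correct = 0b1011_0010
copy_a = correct
copy_b = correct ^ 0b0000_1000   # single-event upset: bit 3 flipped
copy_c = correct

voted = majority_vote(copy_a, copy_b, copy_c)
print(f"{voted:08b}", voted == correct)
```

The vote masks the flipped bit, but only because the hardware has been paid for, launched, powered and cooled three times over.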

None of this matters unless getting hardware to orbit becomes dramatically cheaper. Today, SpaceX charges roughly $2,900 per kilogram to low Earth orbit on Falcon 9. Google’s own feasibility study concluded that orbital data centres only become cost-competitive when launch costs fall below $200 per kilogram, a figure it projects Starship might reach around 2035, assuming 180 launches a year by then.

Starship, if it achieves full reusability at scale, could theoretically hit $67 to $100 per kilogram. That would be transformative. But Starship’s commercial orbital debut has already slipped three times in 2026, and serious analysts are now modelling a “plateau scenario” in which Falcon 9 pricing persists until 2028 or beyond. At current rates, the economics of orbital compute simply fail. A ground-based 40-megawatt AI data centre costs roughly $80-million over five years; a single Starship-equivalent payload at today’s prices runs $150-million to $200-million before you’ve cooled a single chip.
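Launch pricing is linear in mass, so the price points above translate straight into cost per tonne. The sketch assumes a hypothetical 1-tonne compute module and ignores integration, insurance and everything else on the bill; it also shows what tripling the mass for triple modular redundancy does at each price.

```python
def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Launch bill for a payload at a given price per kilogram."""
    return mass_kg * usd_per_kg


# Price points from the column: Falcon 9 today, Google's break-even threshold,
# and the optimistic Starship range.
PRICES = {
    "Falcon 9 today": 2_900,
    "Google threshold": 200,
    "Starship (high)": 100,
    "Starship (low)": 67,
}

module_kg = 1_000               # hypothetical 1-tonne compute module
tmr_module_kg = 3 * module_kg   # the same module with triple modular redundancy

for label, price in PRICES.items():
    print(f"{label:>16}: ${launch_cost_usd(module_kg, price) / 1e6:.2f}m per tonne, "
          f"${launch_cost_usd(tmr_module_kg, price) / 1e6:.2f}m with TMR")
```

At Falcon 9 prices, every tonne costs about $2.9-million to lift; at Google’s threshold, the same tonne costs $200,000.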

And then there is maintenance. On Earth, when a server board fails, someone replaces it in 20 minutes. In orbit, your options are: send a robotic servicing mission costing tens of millions of dollars, or write off the asset and keep paying for the launch that put it there. This is why most serious orbital data centre designs are, frankly, disposable – launched, operated for five years, deorbited, replaced. The concept of upgrading your chips, which hyperscalers do on roughly two-year cycles on Earth, becomes almost farcical in this context.

For AI inference, which is the part of AI that actually reads the prompts and responds to them, latency is everything. An orbital data centre in low Earth orbit sits 400km to 600km above Earth. Round-trip signal delay: 5 to 10 milliseconds. That sounds small but is enough to make real-time inference applications like financial trading essentially unworkable from orbit. The honest use case for orbital compute is either batch AI training – long, energy-intensive jobs that don’t need to respond to anything in milliseconds – or slowish inference. That narrows the market considerably.

The moon, occasionally floated as a longer-term option for data centres, makes the latency problem dramatically worse – roughly 2.5 seconds round-trip – and the logistics of getting hardware there and back are orders of magnitude harder. It is, at best, a 2040 conversation about very specific use cases: processing data from lunar missions, perhaps, or sovereign compute nodes for nations planning a presence there.
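The physical floor on these delays is set by the speed of light. The sketch below assumes a signal travelling straight up and back with no routing, ground-station hops or processing, so real figures such as the 5 to 10 milliseconds quoted for low Earth orbit will be higher; the Moon distance is the average Earth-Moon distance.

```python
C_M_PER_S = 299_792_458.0   # speed of light in vacuum, m/s


def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip light time, in milliseconds, for a straight up-and-back path."""
    return 2 * distance_km * 1_000.0 / C_M_PER_S * 1_000.0


for label, km in [("LEO, 400 km", 400), ("LEO, 600 km", 600),
                  ("Moon, ~384,400 km", 384_400)]:
    print(f"{label}: {round_trip_ms(km):,.1f} ms")
```

Straight overhead, low Earth orbit costs under 5 milliseconds round trip before any real-world overhead, while the Moon costs more than two and a half seconds no matter how good the network is.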

Elon Musk, who has been talking up orbital data centres for SpaceX, has plenty of bedfellows in this race, all dreaming astonishingly ambitious and perhaps foolhardy dreams.

In addition to SpaceX and Starcloud, Google’s Project Suncatcher is targeting two prototype satellites in early 2027. Axiom Space launched its first dedicated orbital compute nodes in January 2026. Nvidia has a product line aimed explicitly at orbital data centres. And somewhere in the background, the Chinese space programme – which first conceptualised orbital data centres in 2011 – is pursuing its own roadmap with characteristic opacity.

The question of whether this is a genuine engineering programme or an investor narrative is uncomfortable but fair. Some of these companies are pre-revenue, operating on white papers and demonstration satellites, pitching a market that requires eight to 10 more years of infrastructure development before a dollar of real revenue appears. That is a familiar structure to anyone who lived through the satellite internet bubble of the late 1990s.

So, what’s the verdict?

Hard-bitten engineers who have thought about this (the ones who have actually tried to keep compute hardware alive in radiation environments) will tell you that orbital data centres at meaningful scale represent a convergence of hard problems that have never been solved simultaneously. Thermal management at megawatt scale, radiation-hardened commercial silicon, sub-$200-per-kilogram launch costs, on-orbit maintenance, and competitive latency for inference: every one of these is a multiple-PhD-level challenge requiring both luck and genius. Solving all of them, concurrently, in a commercially viable timeframe is, well, a little rich.

Futurists (and there are brilliant ones among the believers) will point to the trajectory. Launch costs have fallen a hundredfold in 20 years. Commercial chip hardening is advancing. Deployable radiator technology is maturing. Every one of the engineering barriers is soluble in principle. The question is not whether but when.

I asked Joseph to read this column before I filed it. He raised a point that comes naturally to someone whose future stretches further than mine. Suppose Musk et al actually pull this off by, say, 2040. By then, terrestrial nuclear and solar-plus-battery technology will have improved dramatically. Will space-based data centres be competitive against that?

In any event, I suppose we need to conclude with Musk, who has pulled off things that serious people said were impossible, like reusable orbital rockets, mass-market electric vehicles and a global satellite internet service. He has also torched capital and credibility on timelines that bore no relationship to physics or manufacturing reality. With space-based data centres, he – and the industry gathering behind him – may be doing both things at once: pointing at something achievable, while pretending the road there is shorter and smoother than it actually is.

The stars, as ever, are patient. The investors will be too, at least at first, until they are not. DM

Steven Boykey Sidley is a professor of practice at JBS, University of Johannesburg, a partner at Bridge Capital and a columnist-at-large at Daily Maverick. His new book, It’s Mine: How the Crypto Industry is Redefining Ownership, is published by Maverick451 in South Africa and Legend Times Group in the UK/EU, available now.

The orbital data centre pitch is truly seductive, but there is a pile of unknowns, perhaps high enough to be unscalable. (Photo: Nasa for Unsplash)
