“The old world is dying and the new world struggles to be born: now is the time of monsters.” – Antonio Gramsci, quoted by AI safety researcher Connor Leahy.

Way back in 1818, with the Industrial Revolution in full swing, Mary Shelley’s Frankenstein gave us a warning about creating more than you bargained for. In her story, a scientist’s bizarre experiment creates a sentient being, the tragic and angry Monster, who goes out of control.

Shelley provided the launch pad for many a sci-fi tale over the next two centuries as writers and filmmakers, unsettled by the changes being wrought by technology, cast their minds into a future in which intelligent machines surpass and even destroy us.

Cultural anxiety kicked up a notch with the idea that a self-improving artificial intelligence (AI), perhaps acquiring motives of its own, could run so far ahead of human abilities that, in words attributed to the mathematician John von Neumann in a 1958 tribute by his colleague Stanislaw Ulam, “human affairs, as we know them, could not continue”.

For decades, theorists have been wrestling with how to keep AI compatible with human values if it acquires superhuman intelligence. This “alignment problem” remains unsolved.

It has gained new urgency in recent months, with impressive real-life AI suddenly exploding into public view in the form of ChatGPT, Midjourney, Google’s Bard and others.

A surprising truth about today’s AI is that nobody, not even its creators, understands exactly how it achieves the feats it does. What goes on inside the system is opaque, hence the description “black box”.

“I want to be very clear: I do not think we have yet discovered a way to align a superpowerful system [with human goals],” Sam Altman, CEO of OpenAI – creator of ChatGPT and Dall-E – told the podcaster Lex Fridman last month. “[But] we have something that works for our current scale.”

For now, that means keeping AI from being obviously biased or producing disturbing content. But some in the field are worrying about the future.

Eliezer Yudkowsky, who leads research at the Machine Intelligence Research Institute in the US, wrote in Time magazine recently: “Many researchers steeped in these issues, including myself, expect that the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die.”

He went on: “It’s not that you can’t, in principle, survive creating something much smarter than you; it’s that it would require precision and preparation and new scientific insights, and probably not having AI systems composed of giant inscrutable arrays of fractional numbers.”

On 1 May, Geoffrey Hinton (75), known as the “Godfather of AI” for his crucial pioneering work on neural networks and machine learning, shocked the AI world by announcing that he had resigned from Google so that he could freely sound a warning about the way the technology is going.

“Look at how it was five years ago and how it is now,” he told The New York Times. “Take the difference and propagate it forwards. That’s scary.

“It is hard to see how you can prevent the bad actors from using it for bad things.”

Not all researchers are so pessimistic. Many believe in a utopian outcome – AI that transforms productivity and wellbeing, ushering in an era of superabundance in which all the intractable human problems are solved.

Even the great physicist Stephen Hawking, known for his warnings about AI, sounded a note of optimism not long before his death in 2018: “Perhaps, with the tools of this new technological revolution, we will be able to undo some of the damage done to the natural world by the last one, industrialisation. We will aim to finally eradicate disease and poverty. Every aspect of our lives will be transformed.”

The problem, however, lies in getting from here to there. For now, the most material impact of AI on humans is likely to be in the workplace. AI tools are already being rolled out in businesses around the world. With AI taking over some of the labour, jobs are under threat.

Until the startling debut of the current crop of AI technologies, automation had been expected to replace mostly repetitive, low-skill work. But suddenly it turns out that skilled, even creative, work is looking just as vulnerable. Artists and writers are posting despairingly in public forums; programmers are wondering how long they’ll have jobs.

The likes of architects, engineers, radiologists, bankers and lawyers may be the next to feel threatened. In March, GPT-4, the model behind the newest version of ChatGPT, scored in the top 10% on a simulated US bar exam for lawyers. Its predecessor, released only months earlier, had scored in the bottom 10%.

Estimates of the fallout on jobs vary widely. Economists at Goldman Sachs say the new wave of AI could affect 300 million mainly white-collar jobs around the world, taking over 18% of the work.

The World Economic Forum says that up to 30% of jobs in industrialised countries could be at risk by the mid-2030s, but points out that AI, like previous new technologies, will boost economic growth and create new jobs. How many? Who knows?

Disruption will extend beyond the workplace into culture, politics, security and warfare. AI is already able to produce convincing deepfake videos and images, such as the recent viral one of the pope in an absurd puffer jacket.

AI can mimic your voice, putting words in your mouth after sampling just three seconds of speech. It can muster persuasive arguments. It could power hordes of online bots to influence elections or sow dissent. New start-ups even offer to get AI to do your online dating for you, prompting a blogger to quip: “Eventually it’ll all be catfish catfishing catfish.”

Last month, a German magazine used AI to fake an interview with the stricken F1 great Michael Schumacher – a tasteless step further into the “post-truth” era.

AI will certainly transform battlefields: imagine coordinated swarms of drones and robots moving swiftly in ways incomprehensible to human soldiers. Sixty countries, including the US, South Korea and China, met in The Hague in February to discuss the issues raised by military AI, and Nato has just announced that it will seek an agreement with China on “responsible” uses of the technology.

Today’s most impressive AIs are large language models (LLMs), based on computing systems called neural networks, inspired by the connections in the brain.

The best-known of them, ChatGPT, gained 100 million users in just two months, dwarfing the growth rate of any consumer app in history.

To oversimplify, an LLM is created by coding a learning framework and then throwing enormous amounts of training data at it. What has emerged has surprised even the experts.

“This is one of the really surprising things, talking to the experts, because they will say, ‘These models have capabilities; we do not understand how they show up, when they show up, or why they show up,’” say Tristan Harris and Aza Raskin of the Center for Humane Technology in their presentation titled “The AI Dilemma”.

“You ask these AIs to do arithmetic and they can’t do [it], they can’t do [it], and they can’t do [it]. And at some point, boom, they just gain the ability to do arithmetic and no one can actually predict when that’ll happen.”

And not just arithmetic. In April, researchers from IBM, Samsung and the University of Maryland reported in Nature Communications that they had created an AI able to do high-level physics. Their AI “rediscovered Kepler’s third law of planetary motion ... and produced a good approximation of Einstein’s relativistic time-dilation law”.

Harris and Raskin give another example of the mysteriously emerging abilities of LLMs: “[They] have silently taught themselves research-grade chemistry. So if you go and play with ChatGPT right now, it turns out it is better at doing research chemistry than many of the AIs that were specifically trained for doing research chemistry.”

Which brings us to a key concept in the (possible) future of AI: artificial general intelligence, or AGI, which would not be limited to one kind of task. It would be as versatile as the human mind – and potentially vastly smarter. Even a slightly superhuman AGI would do better than humans at upgrading itself, setting off a machine-speed spiral of self-improvement with no known limits. Theorists call this moment “the technological singularity”. The world beyond the singularity is anyone’s guess.

Nobody knows whether AGI is possible, but few are betting against it. Altman, the OpenAI CEO, said: “When we started [in 2015] saying we were going to try to build AGI … people said it was ridiculous. We don’t get mocked as much now.”

In London’s Financial Times recently, the AI pioneer and investor Ian Hogarth wrote: “A three-letter acronym doesn’t capture the enormity of what AGI would represent, so I will refer to it as what it is: God-like AI.

“To be clear, we are not here yet. But the nature of the technology means it is exceptionally difficult to predict exactly when we will get there. God-like AI could be a force beyond our control or understanding.”

He described an encounter with a leading AI scientist: “The important question has always been how far away in the future [AGI] might be. The AI researcher did not have to consider it for long. ‘It’s possible from now onwards,’ he replied.”

Hinton, the “Godfather of AI” mentioned earlier, told The New York Times: “The idea that this stuff could actually get smarter than people – a few people believed that. But most people thought it was way off. And I thought it was way off … Obviously, I no longer think that.”

Whether God-like AI shows up next week or never, the hurtling advances of the current AI generation will change the world in profound ways, for good or ill. Harris and Raskin say that AI is now progressing at an almost unimaginable “double exponential” rate – an exponential curve on top of another exponential curve.

And Hogarth pointed out: “The [amount of computing power] used to train AI models has increased by a factor of one hundred million in the past 10 years.”
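
To put that figure in everyday terms, the short Python sketch below works out what a hundred-million-fold increase over 10 years would mean per year and per doubling. It is a back-of-envelope illustration only: the two input numbers come from Hogarth’s quote, while the assumption that the growth was smooth and steady is ours, not his.

```python
import math

# Figures taken from the quote: a 100,000,000-fold increase over 10 years.
growth_factor = 1e8
years = 10

# Assumption (not in the source): growth was smooth and exponential,
# so it can be described by a constant doubling time.
doublings = math.log2(growth_factor)              # number of doublings in the period
months_per_doubling = years * 12 / doublings      # average months between doublings
yearly_multiplier = growth_factor ** (1 / years)  # average growth factor per year

print(f"{doublings:.1f} doublings, one every {months_per_doubling:.1f} months")
print(f"roughly {yearly_multiplier:.1f}x more computing power each year")
```

On those assumptions, the computing power used for training would have doubled about every four and a half months, or grown roughly sixfold every year.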

Some experts believe that humanity needs to put the brakes on AI, which is being driven by intense commercial competition, until it has figured out how to deal with the technology’s impacts.

Last month Harris and Raskin were among 1,100 initial signatories – along with Apple cofounder Steve Wozniak, Massachusetts Institute of Technology AI Lab physicist Max Tegmark and SpaceX CEO Elon Musk – to an open letter calling on “all AI labs to immediately pause for at least six months the training of AI systems more powerful than GPT-4”. (The pessimist Yudkowsky did not sign, saying the letter didn’t go far enough.)

Musk, a now-rueful cofounder of OpenAI, has been a prominent voice calling for the regulation of AI. Regulation has been slow in coming, although the EU, China, Brazil and possibly other countries are working on it. However, a technology that is developing so fast might make laws obsolete before the ink dries on them.

Yuval Noah Harari, the historian and futurist philosopher, wrote in The Economist late in April: “We can still regulate the new AI tools, but we must act quickly…

“AI can make exponentially more powerful AI. The first regulation I would suggest is to make it mandatory for AI to disclose that it is an AI. If I am having a conversation with someone, and I cannot tell whether it is a human or an AI – that’s the end of democracy.”

Harris said: “We’re talking about how a race … between a handful of companies [is pushing] these new AIs into the world as fast as possible. We have Microsoft that is pushing ChatGPT into its products ...

“We haven’t even solved the misalignment problem with social media. We know those harms, going back.

“If only a relatively simple technology of social media with a relatively small misalignment with society could cause those things ... second contact with AI [would enable] automated exploitation of code and cyber weapons, exponential blackmail and revenge porn, automated fake religions ... exponential scams, reality collapse.”

He said companies are “caught in this arms race to deploy it and to get market dominance as fast as possible. And none of them can stop it on their own.

“It has to be some kind of negotiated agreement where we all collectively say, which future do we want?” DM168

This story first appeared in our weekly Daily Maverick 168 newspaper, which is available countrywide for R25.

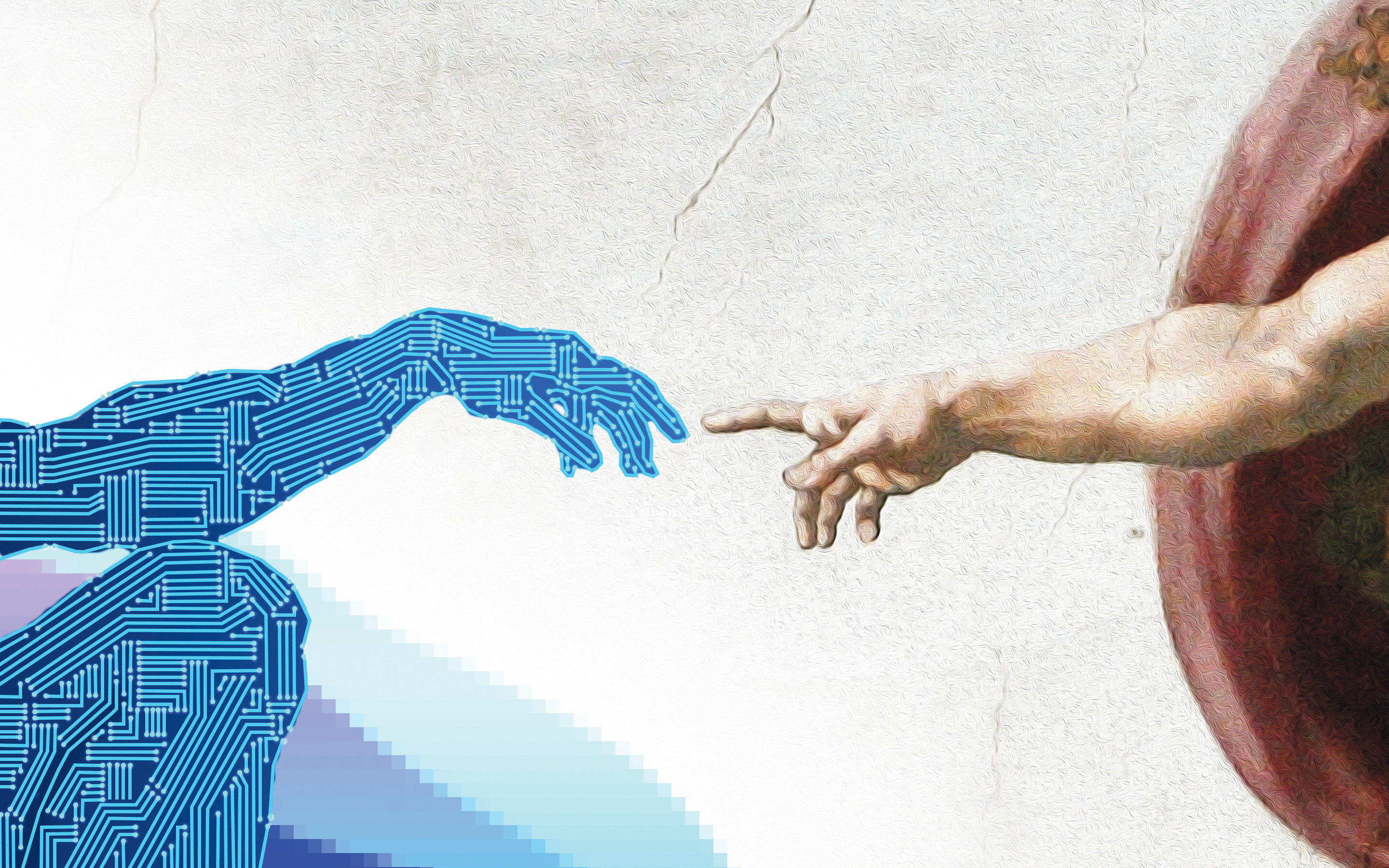

An artificially created ‘Adam’ awaits the touch of God’s finger. Graphic: iStock
