Over the past week, Meta, the parent company of Facebook and Instagram, made headlines after publishing a blog post on 29 September announcing Make-A-Video, a video-generating artificial intelligence (AI) system. According to Meta, the system “lets people turn text prompts into brief, high-quality video clips”. As with art-generating AI previously reported on in Maverick Life, such as Midjourney and DALL-E, a user simply needs to describe a scene using text, and the AI goes to work to create a video of the scene.

This kind of generative technology has the potential to open new opportunities for creators, Meta says, adding that their system learns from publicly available datasets, using images that have descriptions to learn what the world looks like, as well as unlabelled videos to learn how the world moves.

Would-be users of Make-A-Video can also upload static images and the system will add movement to the images, effectively turning them into GIFs. Existing videos can also be uploaded for the system to create variations of the initial video. While the company describes the videos as “high quality”, most examples included in the blog post resemble the low-quality video from the early days of smartphone cameras. That said, the more realistically rendered videos do look like recordings of actual life events, albeit ones shot on a low-quality smartphone camera.

“Since Make-A-Video can create content that looks realistic, we add a watermark to all videos we generate. This will help ensure viewers know the video was generated with AI and is not a captured video,” Meta writes. As currently implemented, the “Meta AI” watermark is positioned on the bottom right corner of the videos.

Presumably, a user with access to basic video-editing software could easily crop the watermark out of the video.

Although Meta’s blog post puts the work they have been doing into the public domain, the system is not yet available to the general public. “Our goal is to eventually make this technology available to the public, but for now we will continue to analyse, test, and trial Make-A-Video to ensure that each step of release is safe and intentional,” they explain.

In saying that, Meta arguably hopes to allay fears from members of the public who are concerned that Make-A-Video could make it possible for users with nefarious intentions to create deep-fake videos that spread misinformation about featured subjects. However, another piece of technology, made publicly available just more than a week before Meta’s announcement, has beaten the company to the uncanny valley by a mile.

“After months of hard work by our product development team, we are proud to announce the launch of our Creative Reality™ Studio. The first-of-its-kind self-service platform we released today enables anyone to easily generate high-quality customized presenter-led video from a single image,” the Israeli AI company, D-ID, wrote in a blog post published on 19 September 2022.

Creative Reality Studio takes an image of a “presenter” and a piece of written text, and the software does the rest to make the person in the image appear as though they uttered the words on video. Users can upload images of anyone as a “presenter” to create a video, or use images of the pre-selected presenters available on the website.

The platform currently supports 119 languages, including surprisingly good “digital human” isiZulu and Afrikaans. However, users of the free trial subscription or the $49/month Pro subscription have access to a limited selection of voices, accents and voice styles such as “angry”, “cheerful”, “sad” and “excited”. The top-of-the-line plan currently has no indication of how much it costs. Instead, where the price should be on the website, the company writes: “Let’s talk.” For these top-tier users, they promise: “You can clone any voice you have permission to use (at additional cost).”

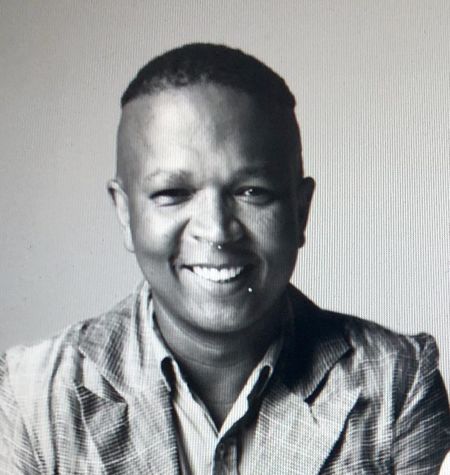

This writer tested out the free plan using an image of his editor, downloaded from a Google image search, and as a result, is now in possession of a video of her promising him a 1,000% pay rise. Unfortunately, without access to the “enterprise” plan and its voice-cloning permission, the promised fortune was delivered in an unconvincing accent distinctly different from hers. Sigh. Additionally, the free plan comes with a full-screen watermark that clearly identifies the video as AI. Paying Pro users, however, get the option of a “small watermark”, while enterprise users get a “custom watermark”.

D-ID is currently marketing the software as a tool to cut the costs that come with employing video professionals, by empowering “business content creators – L&D, HR, marketers, advertisers, and sales teams – to seamlessly integrate video in digital spaces and presentations, generating more engaging content and employing customised corporate video narrators. The platform dramatically reduces the cost and effort of creating corporate video content and offers an unlimited variety of presenters (versus limited avatars), including the users’ own photos or any image they have the rights to use.”

Predictably, the striking realism of the videos raises concerns about the possibility of fake videos being created by actors more nefarious than a mildly greedy writer trying to scam their editor. To that end, D-ID says that they have “partnered with leading privacy experts and ethicists to establish ethical guidelines and codes of conduct for this technology. This is a pledge we are making to bring transparency and fairness to our product and how we and our partners deploy them. Today, we are issuing a pledge for how we intend to operate our business in an ethical way, and also intend it as a call for others in the industry to adopt it.”

Among this list of pledges in the “ethics” section of their website, they promise that they “will strive to develop and use technology to benefit society”, and “will work hard to ensure that our customers are using our technology in ethical, responsible ways”; and where contentious issues are concerned, they will not “knowingly” license the use of their platform to political parties. Nor will they “knowingly work with pornography publishers or terrorist organisations, gun or arms manufacturers. Should we discover that such organisations are leveraging our technology, we will do everything legally within our power to suspend services,” and provided they are “legally allowed” and it is “technically possible”, they will conduct random audits of both original and generated materials that use their technology. DM/ML

In case you missed it, also read An artwork created using artificial intelligence wins competition… and causes uproar

https://www.dailymaverick.co.za/article/2022-09-13-an-ai-generated-image-wins-art-competition-and-causes-uproar/


Image: Maxim Hopman / Unsplash
