The suspicious tweets in South Africa were first picked up on October 30, 2016 and were investigated in earnest on November 3 and 4 after the public release of outgoing Public Protector Thuli Madonsela’s State of Capture report.

More than 100 fake Twitter accounts were tracked, all of them advancing three specific narratives: that the State of Capture report was “useless and rubbish”, that the media and Thuli Madonsela were “captured and biased”, and that the real enemies of the people were “white monopoly capital” and specifically the Rupert family.

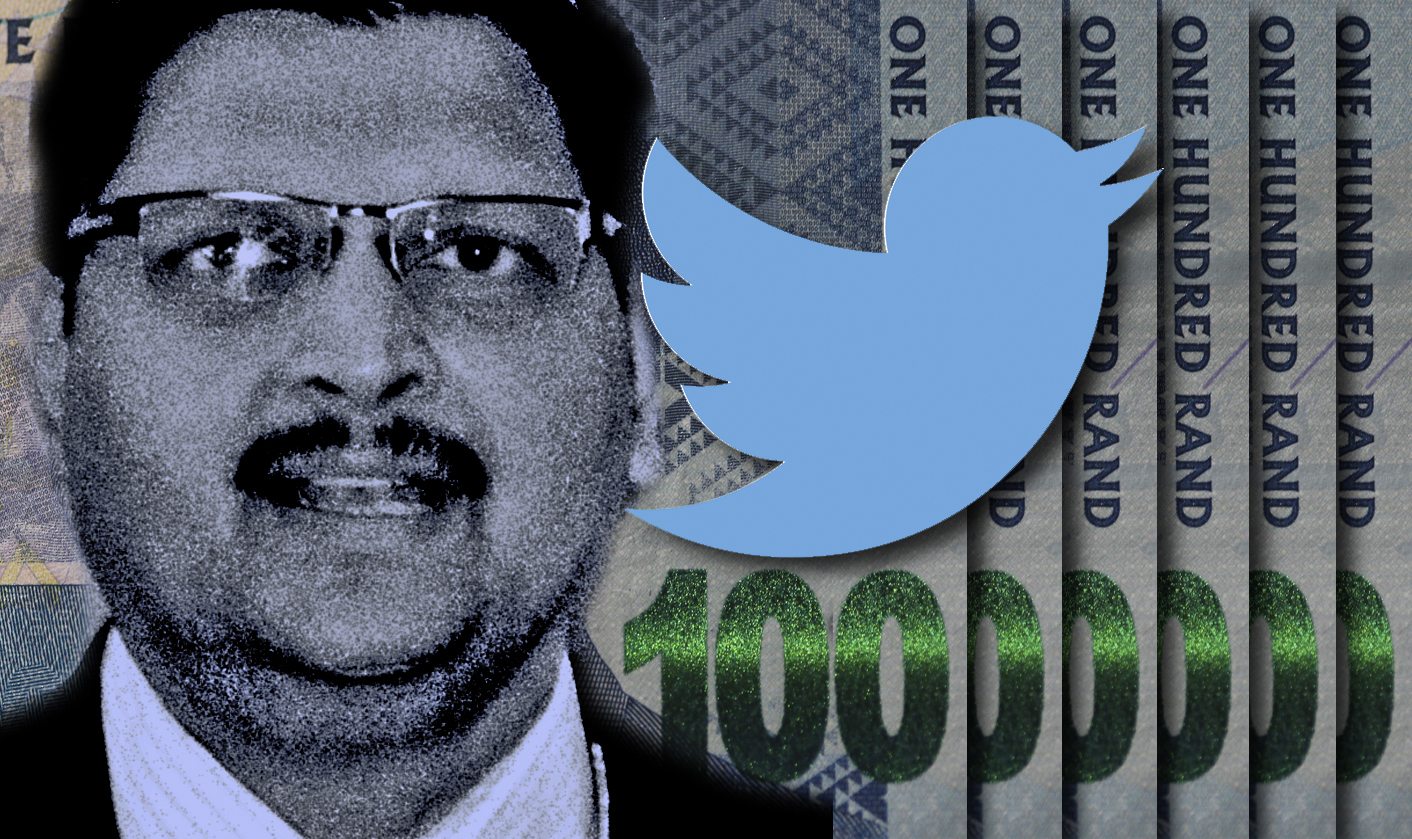

A researcher began tracking “suspicious tweets” from the accounts of Mzwanele (Jimmy) Manyi, President of the Progressive Professionals Forum and a staunch supporter of President Jacob Zuma, and Esethu Hasane, a former UCT student activist and current spokesperson for the Department of Sport and Recreation.

To determine who benefited from the tweets and retweets, the researcher analysed and tallied the words that were retweeted, using a word cloud. The words most frequently used fell into three broad narratives: discredit, destroy and deflect. The most telling term was #handsoffguptas, which our researcher concluded clearly points to the Guptas being “beneficiated in this exercise”.

While the fake accounts are easy to spot, proving that they are fake required some effort and cyber sleuthing.

This is how it was done.

The researcher first became aware of the existence of these suspicious-looking accounts on the evening of October 30, 2016, after noticing that a posted reply had attracted 27 retweets, although none of the accounts retweeting it had actually commented in support of the reply.

Scrolling through some of the accounts, it was immediately apparent that they were retweeting the same tweets in the same order. Their timelines looked identical, save for their Twitter handles.

Finding the fakes

The accounts had to be located manually: a suspected fake account was selected, its timeline scrutinised, and posts and similar retweets identified. The researcher was banking on the fact that the fake accounts would sometimes retweet a tweet that was not particularly popular, which would limit that tweet’s retweets to the fake accounts ONLY. The ideal tweets to look for would come from an obscure user (i.e. one with few followers, rather than a celebrity) and would each have the same number of retweets. Examples are the following:

https://twitter.com/ranierpretoriu1/status/791631654993485824

https://twitter.com/ranierpretoriu1/status/791628301806829573

Or

https://twitter.com/tim_mosia/status/791622100117106688

https://twitter.com/tim_mosia/status/791622214445436929
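The researcher did this matching by hand. As a rough illustration only, the same idea (obscure tweets whose retweeters are the same set of accounts) could be sketched in Python as below. The tweet labels, account names and the 0.8 threshold are hypothetical and are not taken from the investigation.

```python
from itertools import combinations


def jaccard(a, b):
    """Share of accounts common to both retweeter sets (0.0 to 1.0)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def overlapping_tweets(retweeters_by_tweet, threshold=0.8):
    """Return pairs of tweets whose retweeter sets overlap heavily."""
    pairs = []
    items = sorted(retweeters_by_tweet.items())  # sorted by tweet label
    for (t1, r1), (t2, r2) in combinations(items, 2):
        score = jaccard(r1, r2)
        if score >= threshold:
            pairs.append((t1, t2, score))
    return pairs


# Hypothetical data: two obscure tweets retweeted by exactly the same accounts.
sample = {
    "tweet_a": {"acct1", "acct2", "acct3"},
    "tweet_b": {"acct1", "acct2", "acct3"},
    "tweet_c": {"acct7", "acct8"},
}
flagged = overlapping_tweets(sample)
print(flagged)
```

A high overlap on its own proves nothing, since genuine fan communities also retweet together. It only narrows down which accounts deserve a closer look.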

Since the fake accounts cluster, it became easier once names were recognised and could all be “grabbed” simultaneously. The researcher soon began to identify the clusters in which they were operating as well as the characteristics of each account (they rarely have bio entries, and always use generic or stock profile pics; as an example, @Anton_dj99 and @Evelynday999 have the same yellow motorbike as a profile picture). The main identifying factor, however, was the absence of their “own” tweets and the overwhelming prevalence of retweets.
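The “no own tweets, almost all retweets” test can be expressed as a simple ratio per account. The sketch below is an assumption-laden illustration: it treats any text starting with `RT @` as a retweet, and the handles, the minimum of three tweets and the 100% cut-off are invented for the example.

```python
from collections import Counter


def retweet_share(rows):
    """Map each user to the fraction of their tweets that are retweets."""
    total = Counter()
    retweets = Counter()
    for user, text in rows:
        total[user] += 1
        if text.startswith("RT @"):  # assumed marker of a classic retweet
            retweets[user] += 1
    return {user: retweets[user] / total[user] for user in total}


def suspects(rows, min_tweets=3, min_share=1.0):
    """Users with enough tweets whose retweet share meets the cut-off."""
    total = Counter(user for user, _ in rows)
    share = retweet_share(rows)
    return sorted(
        u for u in share if total[u] >= min_tweets and share[u] >= min_share
    )


# Hypothetical rows of (user, text).
sample_rows = [
    ("sock_a", "RT @someone: report is rubbish"),
    ("sock_a", "RT @someone: media is captured"),
    ("sock_a", "RT @other: hands off"),
    ("real_user", "Just read the report myself."),
    ("real_user", "RT @news: report released"),
    ("real_user", "Interesting thread on this."),
]
print(suspects(sample_rows))
```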

Linking the Liars

Once the list of suspect accounts had been identified, the next step was to show that they were linked by something more than their timelines and the order of their retweets. Twitter has an API (application programming interface) which gives access to tweet-related data (this is mostly useful for marketing professionals and the like, and is used to identify key role players and influencers in networks). There is also a free analytics tool for Twitter, Twitter Archive Google Spreadsheet (TAGS), created by Martin Hawksey. The spreadsheet is created on Google Docs, and is then saved to Google Drive as a separate instance of the document. Linking a Twitter account to the spreadsheet enables access to its search and archive features.

The highlighted cells were instructive:

From user (Blue): (just place @ in front to get their handle) indicates WHO tweeted.

Text (Red): indicates WHAT was tweeted.

Time (Purple): indicates WHEN it was tweeted.

Source (Green): indicates what device was used to tweet.

Other interesting columns are the geolocation co-ordinates (none of the accounts had this switched on) and the follower/following columns.

The Red and Orange highlights show that the different tweets were tweeted at exactly the same second. In addition, the Yellow highlights pointed to the fact that Tweetdeck was used. This was the first revelation: the researcher had been under the impression that these were “bots”, but the use of Tweetdeck pointed rather to what are known as “sockpuppet” accounts.

TAGS can create a summary page automatically, which includes plotting a timeline and activity graph for each user account in the Archive. This is where the pattern became very clear:

Again, Green highlights the username, which is self-explanatory.

The Blue highlight is the number of tweets made, and the Yellow shows the percentage of retweets. Red indicates the user’s activity over the period as a graph.

The activity pattern was identical – the accounts would all post their tweets at the same time, and stop tweeting at the same time. This is too similar for coincidence, and looking at the raw data one can see that the timestamps of the tweets are exactly the same. This was proof enough that the accounts were fake.
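For readers who want to check raw data of this kind themselves, one possible way to surface tweets posted at the same second by several accounts is sketched below. It assumes the archive has been reduced to (user, text, timestamp) rows; the handles, timestamps and the three-account minimum are hypothetical.

```python
from collections import defaultdict


def synchronized_groups(rows, min_accounts=3):
    """Group rows by (timestamp, text); keep groups posted by many accounts."""
    groups = defaultdict(set)
    for user, text, timestamp in rows:
        groups[(timestamp, text)].add(user)
    return {
        key: sorted(users)
        for key, users in groups.items()
        if len(users) >= min_accounts
    }


# Hypothetical rows: three accounts retweet the same text in the same second.
sample_rows = [
    ("sock_a", "RT @x: captured media", "2016-11-03 18:04:11"),
    ("sock_b", "RT @x: captured media", "2016-11-03 18:04:11"),
    ("sock_c", "RT @x: captured media", "2016-11-03 18:04:11"),
    ("real_user", "RT @x: captured media", "2016-11-03 19:30:02"),
]
groups = synchronized_groups(sample_rows)
for (timestamp, text), users in groups.items():
    print(timestamp, users)
```

Matching on the exact second is deliberately strict. A looser check would bucket timestamps into short windows, at the cost of more false positives.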

This is also when it became apparent that @ranierpretoriu1 is in control of the other accounts, since his was the only account that made “other” tweets in addition to the usual retweets, as indicated by the Orange column.

Down the Ranier Hole:

Another online tool, Netlytic, which our researcher linked to his Twitter account, enabled him to compile datasets using search terms, which are updated automatically every 15 minutes. It is even more restrictive than Twitter’s API, but it provides awesome analysis tools for looking at a smaller dataset. One of the tools is a word counter, and running @ranierpretoriu1 through this presented the following:

The Red “RT” was due to the number of times retweets were sent. It became apparent that @abrahamcpt19 was getting a high number of mentions. A search for @abrahamcpt19 on Twitter showed that he too had similar suspicious RTs, which led to the discovery of even more fake accounts.

It was now a case of finding the suspicious handles, running them through TAGS, and compiling a spreadsheet for each cluster of tweets. The clusters showed the following patterns:

One “normal” account was pulled in to indicate what “normal” tweeting behaviour looks like compared to the rest. An illustration is @MogaleMaeko – a genuine, real account.

Once this was done, the researcher focused on each cluster’s main accounts and compiled a search for them ONLY. This did not highlight much, except for the fact that @abrahamcpt19 and @ranierpretoriu1 were following similar patterns of tweeting, with the other accounts following different patterns at certain times. The peaks of each were, however, consistent (i.e. they tended to spike and decline at the same time, although the magnitude differed).

Searching for Sense:

A list of about 105 accounts or fake profiles was uncovered. To determine who was behind them, the researcher explored who would benefit from what was being tweeted, and to do that one would in turn need to look at what was being retweeted.

While compiling the lists, the main narratives being presented by the tweets were threefold:

- The State of Capture report was baseless and rubbish;

- The media and Thuli Madonsela were themselves captured and biased;

- The real enemies are white monopoly capital and specifically, the Ruperts.

While our researcher had a pretty good idea where all this was pointing, he was not ready to make any claims without something more tangible to back this up. A single Excel spreadsheet using only the Text column (which showed what was tweeted, without looking at who or when) was created and the raw text pasted into a single Word document. These 18,000 tweets provided 340,901 words spanning 1,219 pages. Even in a spreadsheet this was not easy to interpret, so our researcher made use of https://worditout.com/word-cloud/create to count the words and create the word cloud.

Again, the site had limitations – it would not consider special characters or numbers, and these had to be manually added to the words after the count was done. This however did not alter the word as it was counted, but only its appearance in the word cloud. And this is what emerged.

RT appeared a disproportionate number of times (considering the number of retweets) as well as “amp” (which appeared whenever a retweeted tweet was truncated and Twitter used the ampersand – & – sign to signify this). These were filtered out so as not to clutter the word cloud. The rest of the words could be slotted into the three earlier narratives identified while finding the fake tweet accounts – words like “Guptas” featured heavily, as well as StateCaptureReport, white, monopoly and capital, Thuli Madonsela, Jonas and media. The most telling terms are the #handsoffguptas and #respectguptas hashtags, which point to the Guptas as beneficiaries of this astroturfing.
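The counting step was done with an online word-cloud tool, but the underlying tally is simple enough to sketch. The Python below is an illustrative assumption, not the tool’s actual method: unlike the site, it keeps the leading # or @ on a term, and the sample tweets are invented.

```python
import re
from collections import Counter

# Terms dropped so they do not clutter the tally, as described above.
FILTERED = {"rt", "amp"}


def count_words(texts, filtered=FILTERED):
    """Count lower-cased terms across tweets, skipping the filtered ones."""
    counts = Counter()
    for text in texts:
        # Keep an optional leading # or @ so hashtags and handles stay intact.
        for term in re.findall(r"[#@]?\w+", text.lower()):
            if term not in filtered:
                counts[term] += 1
    return counts


# Hypothetical tweet texts.
sample_texts = [
    "RT @user: #HandsOffGuptas media &amp; capital",
    "RT @other: #handsoffguptas",
]
counts = count_words(sample_texts)
print(counts.most_common(3))
```

Lower-casing merges variants such as #HandsOffGuptas and #handsoffguptas into one entry, which is usually what a word cloud wants.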

The main accounts also had similar words in their tweets:

A matter which only became apparent after our researcher posted the tweets on Twitter was that Jennifer Lopez appeared on the word cloud. It was puzzling until another Twitter user pointed out that Lopez was due to perform at the (later cancelled) SATY Awards, and that ANN7 tweeted about this. With these accounts being so critical of the media, the retweet of ANN7 further points to a Gupta link for the tweets.

Last year The Guardian exposed working conditions for Putin’s “troll army” and at the time it was unclear, wrote the paper, whether the Saint Petersburg troll hub was the only one or whether there were others.

For now it appears as if South Africa has its very own troll hub that might very well be situated at Saxonwold or at least a well-known but mysteriously difficult-to-find shebeen nearby. DM

An earlier version of this piece described Esethu Hasane as a student activist. He has since graduated and is spokesperson for Sports Minister Fikile "Master of the Selfie" Mbalula.

Additional Links: